xAI is rebuilding after losing most founders, again!

Plus: Spotify finally lets you fix your music algorithm

Hello, Prohuman

Today, we will talk about these stories:

Musk says xAI “was not built right”

Databricks buys a tool to monitor AI agents

Spotify lets users edit their algorithm profile

xAI keeps resetting while rivals move ahead

Only two of xAI’s original 11 co-founders are still at the company.

Several more senior engineers left this week as Elon Musk said the lab is being rebuilt because it “was not built right the first time.” The immediate problem is coding tools, where xAI is trailing Anthropic’s Claude Code and OpenAI’s Codex, an area many labs see as the clearest path to real revenue.

LinkedIn shows xAI with a little over 5,000 employees, compared with about 7,500 at OpenAI and roughly 4,700 at Anthropic.

This looks less like a clean rebuild and more like a lab struggling to find its product footing while competitors ship tools developers already use every day.

Coding assistants pay the bills, and falling behind there puts pressure on everything else xAI is trying to build. Musk is still chasing a bigger idea called Macrohard, an agent meant to handle white-collar computer work, though the project has already lost its leader and is reportedly paused.

You can almost picture the late-night meetings and whiteboards as teams try to restart pieces of the plan. How many rebuilds can an AI lab do before the market moves on?

AI agents are harder to trust than deploy

Image Credits: Databricks

Companies can launch AI agents today, but few know how well they actually behave once they start running.

Databricks just acquired Quotient AI, a startup built by engineers who previously worked on improving GitHub Copilot’s code quality. Quotient’s system analyzes full agent traces in production and flags problems like hallucinations, reasoning errors, or incorrect tool use across Databricks platforms such as Genie, Genie Code, and Agent Bricks.

This deal points to a problem people building AI agents already see in production. Getting an agent to run is straightforward; figuring out why it fails after thousands of real user interactions is still messy work.

Quotient’s approach matters because it treats agent behavior like telemetry, collecting signals and feeding them back into training loops.
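To make the "traces as telemetry" idea concrete, here is a minimal sketch of what flagging problems in an agent trace could look like. It is not Quotient's or Databricks' implementation. The `Step` record, the `ALLOWED_TOOLS` set, and the flag labels are all hypothetical names made up for illustration, and it only covers the easy case of incorrect tool use, not hallucinations or reasoning errors.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional

# Hypothetical trace record: one step an agent took during a run.
@dataclass
class Step:
    kind: str                   # "tool_call" or "answer"
    tool: Optional[str] = None  # tool name, only for tool calls
    ok: bool = True             # whether the tool call succeeded

# Assumed allow-list of tools this agent is permitted to use.
ALLOWED_TOOLS = {"sql_query", "search_docs"}

def flag_trace(steps):
    """Return (step_index, label) pairs for problems found in one trace."""
    flags = []
    for i, step in enumerate(steps):
        if step.kind == "tool_call":
            if step.tool not in ALLOWED_TOOLS:
                flags.append((i, "unknown_tool"))
            elif not step.ok:
                flags.append((i, "tool_error"))
    # A run that never produces a final answer is flagged too.
    if not steps or steps[-1].kind != "answer":
        flags.append((len(steps), "no_final_answer"))
    return flags

def summarize(traces):
    """Aggregate flag counts across many traces, like a telemetry rollup."""
    counts = Counter()
    for steps in traces:
        for _, label in flag_trace(steps):
            counts[label] += 1
    return counts

traces = [
    [Step("tool_call", "sql_query"), Step("answer")],
    [Step("tool_call", "send_email"), Step("tool_call", "search_docs", ok=False)],
]
print(summarize(traces))
```

The useful part is the rollup: one bad trace is an anecdote, but counts per failure type across thousands of runs tell a team where to look first.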

If this works, Databricks could become the place where enterprises run and monitor agents tied to their internal data. The quiet sound here is the logging pipeline humming at 2 a.m. while the system sorts through thousands of traces.

The next question is simple: who owns the debugging layer for AI agents?

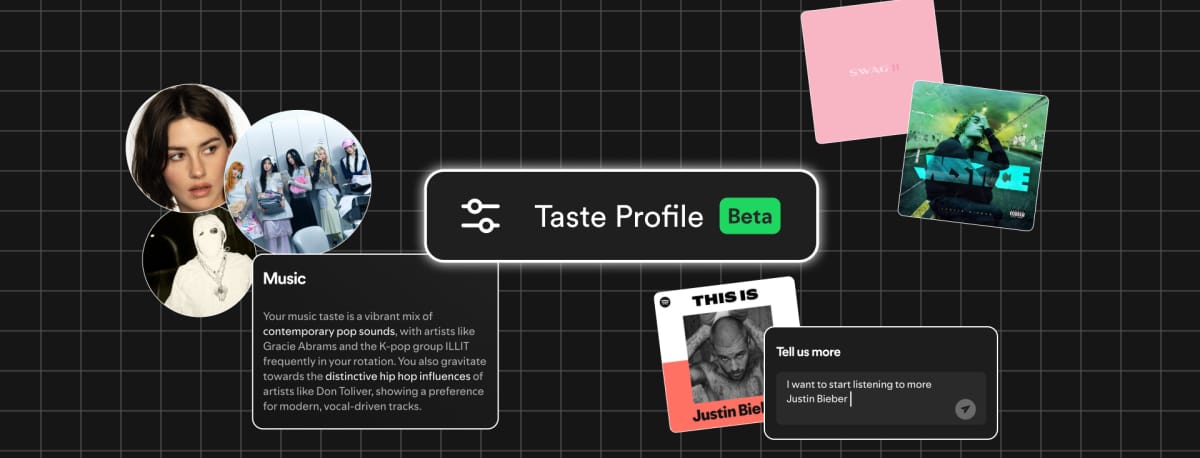

Spotify gives users control over their recommendation model

Image Credits: Spotify

Spotify is letting users directly edit the data that shapes their recommendations.

The company announced at SXSW that listeners will soon be able to view and modify their “Taste Profile,” the internal model that powers features like Discover Weekly and Spotify Wrapped. Premium users in New Zealand will see the beta first, with tools that let them adjust recommendations using natural language prompts inside the app.

This is a quiet admission that recommendation systems drift in ways users cannot easily fix. Shared speakers, kids using the account, and background audio slowly distort the profile that drives the algorithm.

Giving people a control panel for that model is overdue. If users start correcting their profiles directly, Spotify gets cleaner signals about what people actually want to hear. Late at night, when someone plays sleep sounds or children’s songs through the living room speaker, the algorithm no longer has to treat that as permanent taste.
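A toy example shows why this kind of correction matters. Spotify has not published how Taste Profile works, so the sketch below is purely illustrative: it assumes a profile is nothing more than the share of plays per genre, and the `taste_profile` function, the genre names, and the listening contexts are all hypothetical.

```python
from collections import Counter

def taste_profile(plays, excluded_contexts=frozenset()):
    """Share of plays per genre, skipping any excluded listening contexts.

    `plays` is a list of (genre, context) pairs. This is a toy model,
    not Spotify's actual Taste Profile.
    """
    counts = Counter(
        genre for genre, context in plays if context not in excluded_contexts
    )
    total = sum(counts.values())
    if total == 0:
        return {}
    return {genre: n / total for genre, n in counts.items()}

# Hypothetical listening history: personal listening plus shared-speaker noise.
plays = (
    [("indie", "headphones")] * 6
    + [("sleep sounds", "living room speaker")] * 3
    + [("kids", "living room speaker")] * 1
)

drifted = taste_profile(plays)
corrected = taste_profile(plays, excluded_contexts={"living room speaker"})
print(drifted)
print(corrected)
```

In this made-up history, four of ten plays come from the shared speaker, so they take up 40% of the uncorrected profile. Excluding that context leaves a profile built only from the listener's own plays.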

The bigger question is whether users will actually manage their algorithm, or ignore it the way most people ignore settings menus.
