
Inside OpenAI’s latest threat report

Plus: Does being polite to AI matter?

Hello, Prohuman

Today, we will talk about these stories:

How threat actors are using AI

Google pulls Intrinsic closer

What actually improves AI answers


AI misuse is rarely one-tool work

Image Credits: OpenAI

Threat actors are mixing AI models into existing playbooks, not building entirely new ones.

OpenAI’s February 25, 2026 report outlines case studies from the past two years showing how malicious groups use AI alongside websites and social media accounts. In one example, a Chinese influence operator used multiple AI models at different points in its workflow rather than relying on a single system.

The takeaway: AI is becoming a utility inside coordinated campaigns, folded into operations that were already running across platforms and tools.

The more useful insight is operational: misuse is distributed, which means tracking it requires watching patterns across services rather than focusing on one model or one provider at a time.

That raises the bar for detection and collaboration, because enforcement cannot sit with a single company when campaigns move between models and public platforms in the same afternoon.

It also suggests these actors see AI as interchangeable infrastructure. If AI is just another tool in the stack, who is responsible for stopping the full stack?

Google wants AI in factories

Image Credits: Intrinsic

Google is folding its robotics software arm closer to home.

Alphabet-owned Intrinsic, spun out of X in 2021, is joining Google while staying a distinct entity and working with DeepMind and Gemini models. The company builds software to make industrial robots easier to program, launched Flowstate in 2023, and released a Vision AI model in late 2025.

This is Google admitting that models alone are not the endgame. If AI is going to justify its cost, it has to run machines that produce things, and Intrinsic gives Google a path into factories without starting from scratch.

Intrinsic already partnered with Foxconn in October 2025 to push toward full factory automation, and now it gets direct access to Google’s cloud and AI stack. Picture a factory floor at 6am, conveyor belts humming, cameras feeding data into Gemini-backed systems trained to adjust in real time.

If physical AI is the next revenue wave, how many tech giants will follow Google onto the factory floor?

Stop managing AI’s feelings

Image Credits: BBC

Calling a chatbot “smart” does almost nothing.

Researchers tested whether flattery, politeness, or even insults improved AI accuracy, and results were inconsistent, sometimes even contradictory. One 2024 study found polite prompts helped, another found insults worked better, and a separate experiment showed pretending to be on Star Trek improved basic maths performance.

The bigger shift is that newer models like ChatGPT, Gemini, and Claude are better at identifying what matters in a prompt, so tone tweaks are mostly noise.

If you want better output, the practical advice is simple: ask for three to five options, give real examples of what you want, and let the model interview you one question at a time before it answers.
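To make that advice concrete, here is a minimal Python sketch that assembles one prompt applying all three tips: it supplies real examples, asks the model to interview you one question at a time, and requests three to five options. The `build_prompt` helper and its parameters are hypothetical, written for illustration only and not part of any chatbot's API; the text it returns is simply meant to be pasted into ChatGPT, Gemini, or Claude.

```python
def build_prompt(task: str, examples: list[str], n_options: int = 3) -> str:
    """Hypothetical helper: build a prompt that applies the three tips above."""
    # The article suggests asking for three to five options, so enforce that range.
    if not 3 <= n_options <= 5:
        raise ValueError("n_options should be between 3 and 5")

    lines = [f"Task: {task}", ""]

    # Tip: show real examples of what you want instead of describing tone.
    if examples:
        lines.append("Here are examples of what I want:")
        lines.extend(f"- {ex}" for ex in examples)
        lines.append("")

    # Tip: let the model interview you one question at a time before it answers.
    lines.append(
        "Before answering, interview me: ask one clarifying question at a time "
        "and wait for my reply before asking the next."
    )

    # Tip: ask for several options rather than a single answer.
    lines.append(f"When you have enough context, give me {n_options} distinct options.")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_prompt(
        "Write a subject line for our AI newsletter",
        ["Inside OpenAI's latest threat report", "Google wants AI in factories"],
        n_options=5,
    ))
```

Note that nothing in the template flatters or scolds the model; the structure does the work that tone tweaks were supposed to do.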

Role-playing can help with brainstorming or mock interviews, though it can push the model toward overconfidence when facts matter. Seventy percent of people say “please” anyway, often out of habit, fingers tapping on a phone screen late at night.

If the model is just a tool, why are so many of us still talking to it like a person?

Prohuman team

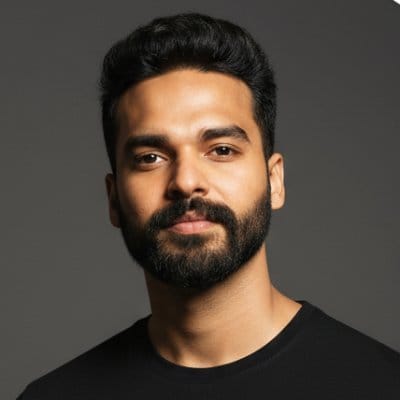

Founder: covers emerging technology, AI models, and the people building the next layer of the internet.

Founder: writes about how new interfaces, reasoning models, and automation are reshaping human work.

Free Guides

Explore our free guides and products to get into AI and master it.

All of them are free to access and will stay free.

Feeling generous?

You know someone who loves breakthroughs as much as you do.

Share The Prohuman: it’s how smart people stay one update ahead.