Google pushes AI closer to the device

Plus: Anthropic gets deeper into enterprise AI

Hello, Prohuman

Today, we will talk about these stories:

Arm CPUs get a real AI workload

Washington and Beijing talk AI risks

PwC puts Claude into client work

Your agent needs more than 2 projects

You prompt. The agent builds. Then it asks for a database.

Ghost is Postgres made for this. Spin one up in seconds. Fork it like a branch. Delete it when you're done. Pay nothing when it's idle.

Your agent gets full SQL, MCP support, and as many databases as it needs. No dashboards. No provisioning. No forgotten dev databases draining your card at month end.

Build a weekend app. Fork the schema three different ways. Throw two of them out. Ghost doesn't care. The next prompt can spin up a fresh one.

You're already vibe-coding the app. Stop wiring up the backend.

Unlimited databases. Unlimited forks. 100 compute hours a month. 1 TB of storage. Free.

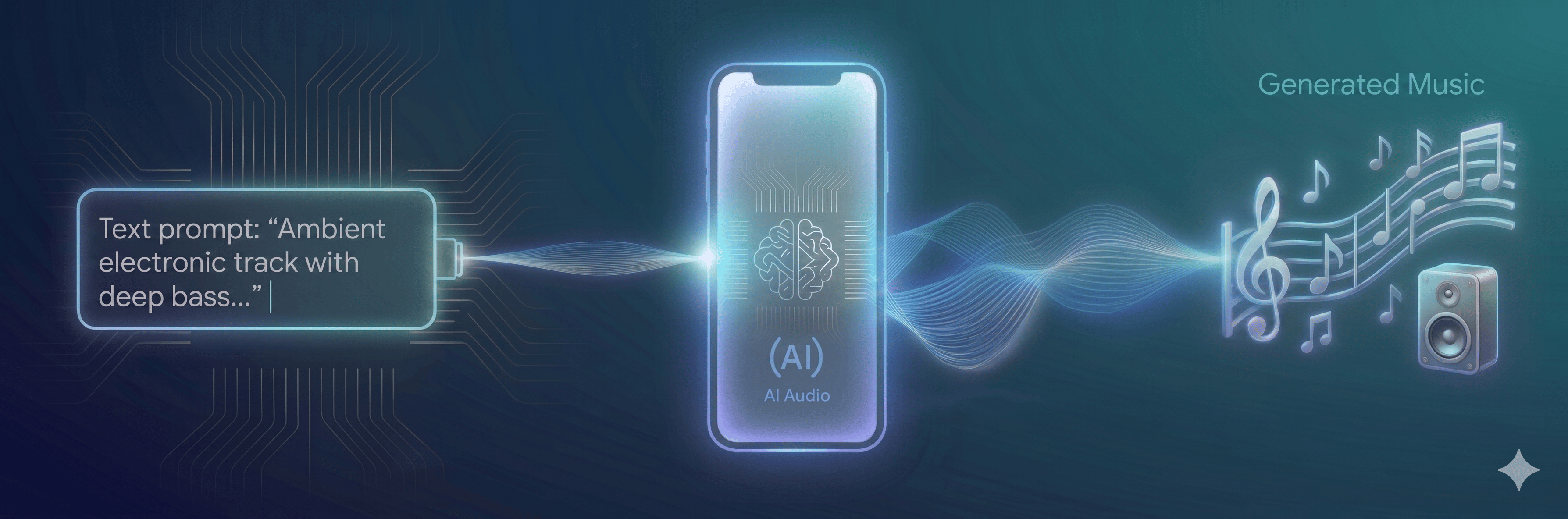

On-device AI is getting less theoretical

Image Credits: Google

Google says an Arm CPU can now generate 11 seconds of audio in under 8 seconds.

Google and Arm are showing how LiteRT, XNNPACK, KleidiAI, and Arm’s SME2 can speed up AI inference directly on CPUs. The demo uses Stability AI’s stable-audio-open-small model, converted from PyTorch, quantized into mixed FP16 and INT8, then run on Android and Mac hardware.
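To make the quantization step concrete, here is a minimal Python sketch of symmetric per-tensor INT8 quantization. It is not the specific recipe used in the Google and Arm demo: the toy weights, the single per-tensor scale, and the helper names `quantize_int8` and `dequantize_int8` are illustrative assumptions. It shows why storing weights as 8-bit integers instead of 32-bit floats cuts their storage roughly 4x, and what FP16 costs by comparison.

```python
from array import array
import struct

def quantize_int8(weights):
    # Hypothetical helper. Symmetric per-tensor scheme (an assumption, not the
    # demo's recipe): each weight w is approximated as scale * q, q in [-127, 127].
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize_int8(q, scale):
    # Hypothetical helper: map the integers back to approximate float weights.
    return [scale * v for v in q]

weights = [0.5, -1.27, 0.003, 0.9, -0.25, 1.0, -0.8, 0.12]  # toy tensor
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

# Byte counts for the raw weight payload, ignoring the single per-tensor
# scale, which is negligible for tensors with millions of weights.
fp32_bytes = len(array("f", weights).tobytes())               # 4 bytes per weight
fp16_bytes = len(struct.pack(f"<{len(weights)}e", *weights))  # 2 bytes per weight
int8_bytes = len(array("b", q).tobytes())                     # 1 byte per weight

print(f"scale={scale:.5f} max_err={max_err:.5f}")
print(f"FP32={fp32_bytes}B FP16={fp16_bytes}B INT8={int8_bytes}B")
print(f"FP32 -> INT8 ratio: {fp32_bytes / int8_bytes:.1f}x")
```

Rounding to the nearest integer keeps each weight's error within half the scale. Production recipes often use per-channel scales and leave accuracy-sensitive layers in FP16, which is presumably why the demo mixes the two formats.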

The numbers are useful.

Generation time dropped from 14 seconds to 6.6 seconds on an SME2-based Android device running a single thread, while the DiT (diffusion transformer) submodel became roughly 4x smaller. My read is that this matters because CPU-based AI gives developers a path that doesn't depend on every phone shipping the same specialized accelerator.
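A back-of-the-envelope reading of these figures. The real-time factor (RTF, generation time divided by audio length) is an assumed yardstick here, not a metric the announcement names:

$$
\begin{aligned}
\text{speedup} &= \frac{t_{\text{before}}}{t_{\text{after}}} = \frac{14\ \text{s}}{6.6\ \text{s}} \approx 2.1\times \\
\text{RTF} &= \frac{t_{\text{generate}}}{t_{\text{audio}}} < \frac{8\ \text{s}}{11\ \text{s}} \approx 0.73
\end{aligned}
$$

An RTF below 1 means the clip finishes generating before it would finish playing.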

This could make local image, audio, and assistant features easier to ship across more devices, especially when latency, privacy, or connectivity matter. It also moves the developer work into familiar tools, with Model Explorer, quantization recipes, and C++ buffers sitting close to the metal.

The next test is whether developers can keep quality high when these models leave the demo bench.

The AI race now needs rules

Image Credits: The Economic Times

Bessent said the two AI superpowers are finally going to talk.

Speaking from Beijing, Treasury Secretary Scott Bessent said the U.S. and China will discuss AI guardrails, including protocols to keep powerful models away from nonstate actors. He did not say when the talks would start.

The timing matters.

The striking part is that Bessent framed cooperation around U.S. advantage, saying China is “substantially behind us” and that American best practices should shape the global approach. That makes the safety conversation useful, but also fragile, because both sides still see AI as a race they cannot afford to slow.

The shared concern is clear enough: hackers, terrorists, and possible biological weapons. But the room in Beijing still has the feel of a hard table under bright lights, with each side watching what the other gives away.

Can rivals build rules while racing past them?

PwC is turning Claude into delivery infrastructure

Image Credits: Anthropic

PwC says Claude is already running inside real client work.

PwC and Anthropic are expanding their alliance, with PwC rolling out Claude Code and Cowork across U.S. teams first, then toward a global workforce of hundreds of thousands. The firms will also create a joint Center of Excellence and train and certify 30,000 PwC professionals on Claude.

This is not small.

The useful part is the production detail: underwriting cut from ten weeks to ten days, cybersecurity response moving from hours to minutes, and HR work handling thousands of daily transactions. My read is that PwC is using Anthropic less like a software vendor and more like a new work layer for consulting, deals, finance, and engineering.

That could pressure other services firms to show proof beyond demos, especially in regulated sectors where trust and compliance decide adoption. It also puts Claude closer to the ordinary objects of office work, like spreadsheets, documents, and presentation files open on a desk.

The open question is how much of this becomes durable client value, and how much becomes consulting firms selling faster delivery.

Prohuman team

Founder. Covers emerging technology, AI models, and the people building the next layer of the internet.

Founder. Writes about how new interfaces, reasoning models, and automation are reshaping human work.

Free Guides

Explore our free guides and products to get into AI and master it.

All of them are free to access and will stay free for you.

Feeling generous?

You know someone who loves breakthroughs as much as you do.

Share The Prohuman: it's how smart people stay one update ahead.