Cursor’s model reveal raises questions

Plus: Enterprise AI is moving past copilots

Hello, Prohuman

Today, we will talk about these stories:

Cursor didn’t build its model from scratch

This startup wants AI in your meetings

New Jersey moves on AI disclosure rules

Your Docs Deserve Better Than You

Hate writing docs? Same.

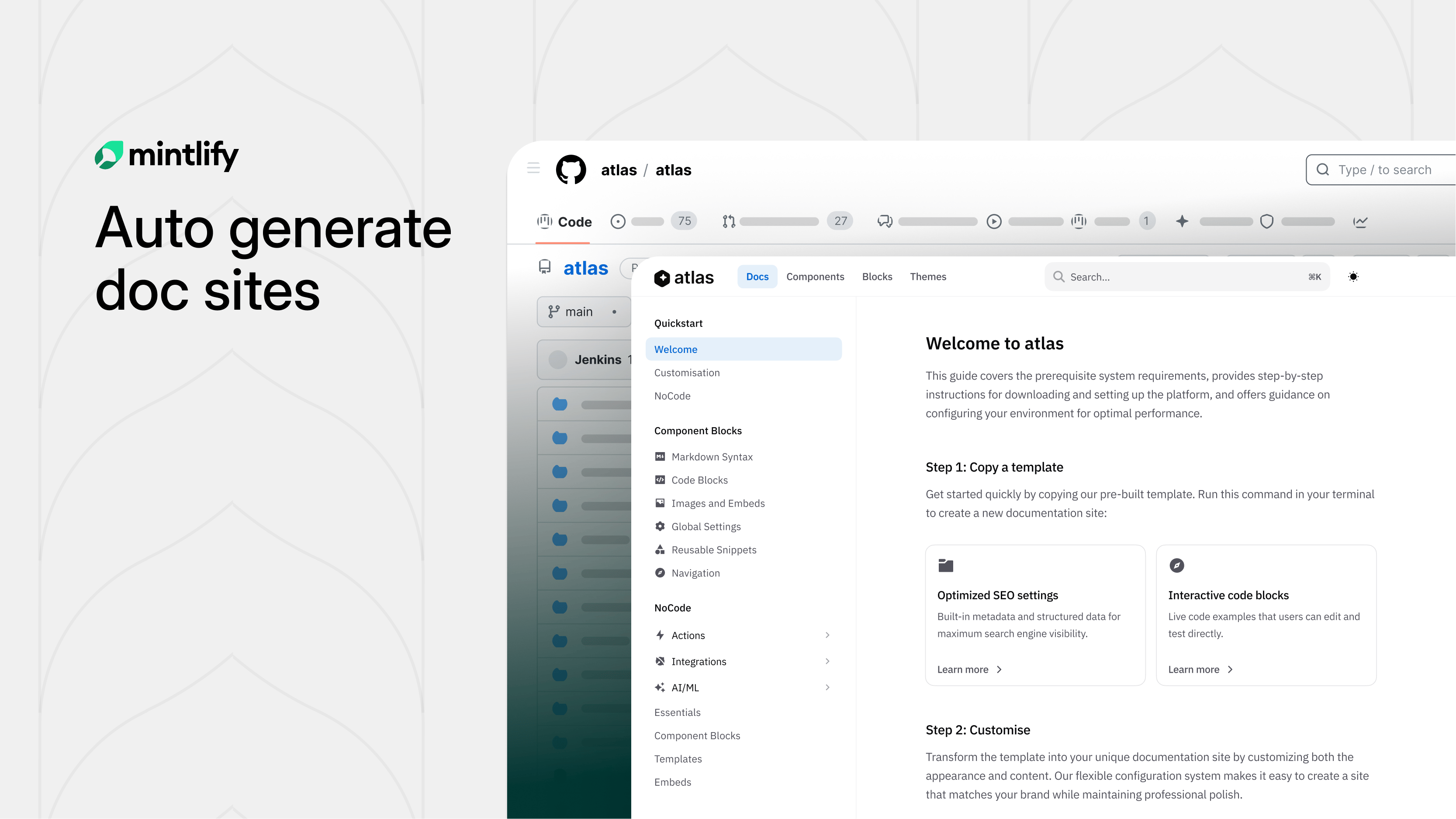

Mintlify built something clever: swap "github.com" with "mintlify.com" in any public repo URL and get a fully structured, branded documentation site.

Under the hood, AI agents study your codebase before writing a single word. They scrape your README, pull brand colors, analyze your API surface, and build a structural plan first. The result? Docs that actually make sense, not the rambling, contradictory mess most AI generators spit out.

Parallel subagents then write each section simultaneously, slashing generation time nearly in half. A final validation sweep catches broken links and loose ends before you ever see it.

What used to take weeks of painful blank-page staring is now a few minutes of editing something that already exists.

Try it on any open-source project you love. You might be surprised how close to ready it already is.

A new model built on someone else’s work

Image Credits: Cursor

The model name gave it away.

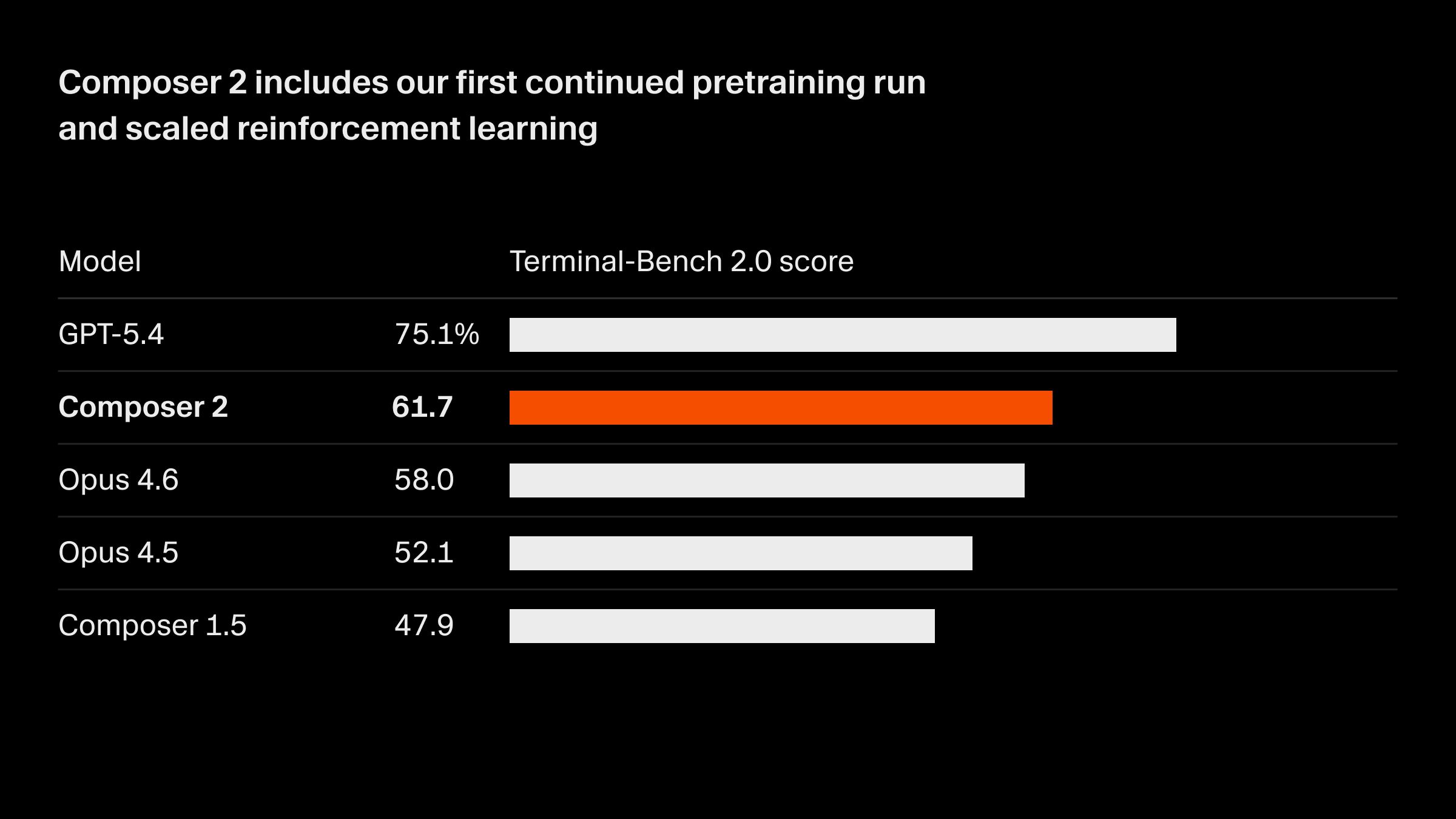

Cursor launched Composer 2 as a top-tier coding model, then admitted it was built on top of Moonshot AI’s open-source Kimi K2.5 after users spotted references in the code.

The company says only about 25% of the compute came from the base model, with the rest from its own training, and claims the final performance is meaningfully different.

Building on an open-source base model is normal practice.

What stands out is the omission, especially from a company valued at $29.3 billion and already doing over $2 billion in annualized revenue, because it suggests the branding still leans heavily on the idea of building from scratch.

There is also a geopolitical layer here, since the base model comes from a Chinese lab and that detail was left out until someone else surfaced it. You can hear the fan spin on a laptop running code generation, steady and loud.

If more companies quietly build on open models, the real competition shifts from who trains the base model to who refines, packages, and sells it best. How many “new” models are just layers on top?

AI tools are starting to act like coworkers

Image Credits: TruGen AI

It joins your meetings.

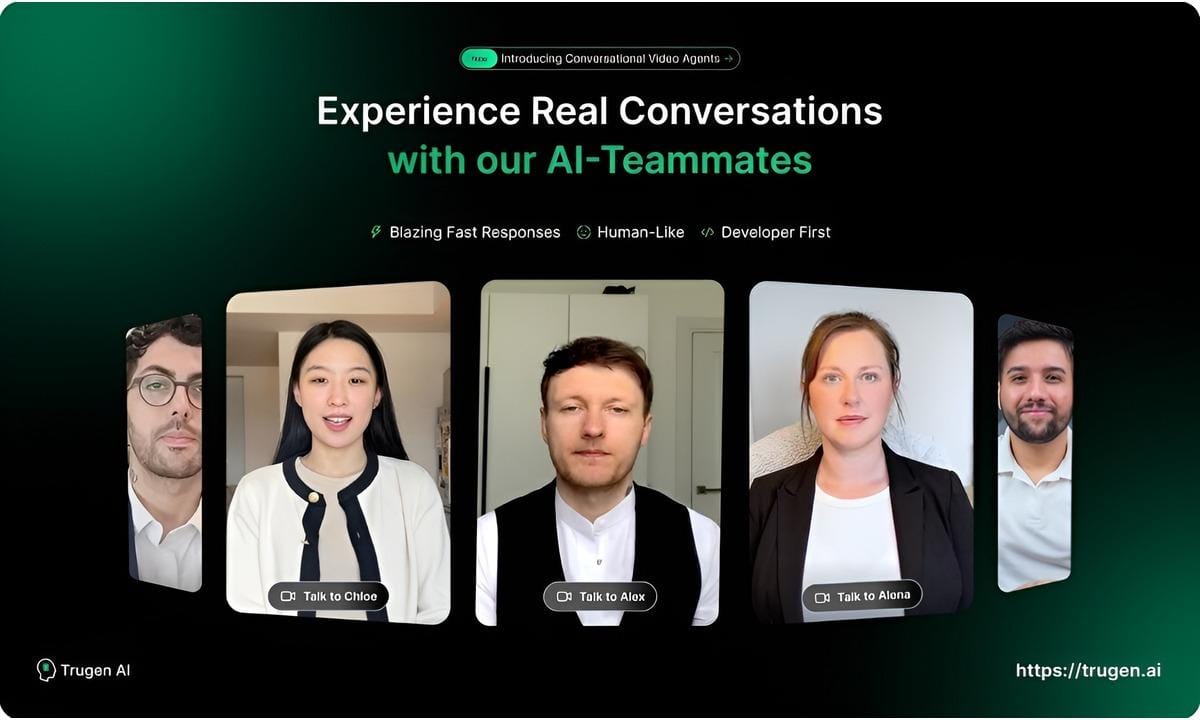

TruGen AI says its new “AI Teammates” can sit in video calls, run workflows, and retain company knowledge over time, with deployment inside enterprise AWS environments and compliance with standards such as SOC 2 and HIPAA.

The pitch is a shift from copilots that suggest work to systems that actually do it, across sales demos, hiring screens, and customer onboarding.

This feels early. The capabilities sound familiar if you’ve seen agents and avatar tools stitched together, but packaging it as a persistent “teammate” with memory is a stronger claim that will be hard to prove inside messy real organizations.

There is also a trust gap, since giving something access to meetings, internal systems, and long-term memory raises real governance and accuracy issues that compliance labels alone do not solve.

You can picture a laptop open in a conference room, camera light on, an AI face talking. If this works even halfway, companies will start measuring output per team differently and rethink hiring at the margins. Do people treat this like a tool or a colleague?

AI labels are becoming mandatory

The fine starts at $6,000.

New Jersey lawmakers advanced a seven-bill package to regulate AI across political ads, business interactions, and companion chatbots, with disclosure requirements and penalties for violations.

One bill forces clear labels on AI-generated campaign content, another requires businesses and chatbot operators to tell users when they are interacting with AI, and others cover professional use, real estate ads, and child safety.

This is overdue. The interesting part is how broad it is, because it treats AI less like a niche tech issue and more like basic consumer protection that should apply anywhere people could be misled or manipulated.

The political ad rule matters most, since one AI-heavy campaign video already pulled over 5 million views and showed how fast synthetic content can spread before voters even know what they are looking at.

Other states will copy this. If disclosures become standard, the next fight is whether labels actually change behavior or just become ignored fine print.

Prohuman team

Founder. Covers emerging technology, AI models, and the people building the next layer of the internet.

Founder. Writes about how new interfaces, reasoning models, and automation are reshaping human work.

Free Guides

Explore our free guides and products to get into AI and master it.

All of them are free to access and will stay free.

Feeling generous?

You know someone who loves breakthroughs as much as you do.

Share The Prohuman: it’s how smart people stay one update ahead.